How to Build an MVP: Complete Guide (Including AI MVPs in 2026)

- Riya Thambiraj

- Artificial Intelligence

Key Takeaways

- AI is attracting massive funding, but most AI startups and MVPs fail because of unclear problems, weak data, and poor execution.

- A traditional MVP is a small, production-ready product that tests one core assumption with real users and real data.

- Prototypes and MVPs are different: prototypes test concepts cheaply, while MVPs validate product-market fit in real conditions.

- AI MVPs differ from traditional MVPs because they depend heavily on data quality, model performance, monitoring, and compliance.

- Building an MVP first reduces cost and risk, speeds up validation, and gives investors and stakeholders a working product to trust.

- An effective AI MVP focuses on one core AI feature, a small but clean dataset, and iterative testing with real users before scaling.

- Choosing the right sourcing model (in-house, partial outsource, or full outsource) affects speed, cost, IP ownership, and long-term maintainability.

- Key success factors for AI MVPs include a strong data strategy, user-centric design, secure and compliant architecture, and robust integrations.

- AI MVP costs vary widely based on data, model complexity, infrastructure, and talent, so careful scoping and tool selection are critical to avoid waste.

AI has become the centerpiece of global innovation. In the first half of 2025 alone, $59.6 billion, more than 53% of total global venture funding, was invested in AI startups. Investors are backing teams that can turn AI-driven ideas into working MVPs fast.

Yet the reality is sobering. The failure rate for AI startups is estimated at 90%, far higher than traditional tech ventures. Most stumble due to unclear market needs, lack of defensible data assets, or over-reliance on third-party foundation models. In other words, funding alone is not enough.

The difference between success and failure often lies in how the MVP is built. In 2025, 9 of the 11 $100M+ mega-deals in digital health went to startups that applied AI to industry-specific problems, not those chasing general-purpose AI. Targeted, practical MVPs are winning investor trust.

But even at the MVP stage, risks are high. Studies show that around 75% of AI MVPs fail to deliver ROI because of unclear objectives, unreliable data pipelines, poor integration, or the inability to scale beyond pilots.

Our Perspective

We help startups and enterprises avoid these pitfalls by building lean, scalable AI MVPs that deliver measurable outcomes from day one. We have been working in the AI space for almost 2 years, building prototypes that have now matured into full-featured products across industries.

Who Should Read This Article?

This article is for startup founders, co-founders, digital agencies, enterprises, project managers, VPs, and digital marketing leads who are exploring AI-powered product development. If you are considering an AI MVP, this guide will help you:

- Understand the core differences between AI MVPs and traditional MVPs

- Learn the step-by-step process of AI MVP development

- Anticipate challenges and hidden costs before they derail your project

- Choose the right tools, data strategies, and development partners

Why We Wrote This Guide

We wrote this article because too many AI MVPs fail unnecessarily. By sharing a structured AI MVP development guide, we want to give you practical, real-world insights to validate faster, scale smarter, and maximize ROI from your AI initiatives.

Before we get into the AI-specific path, it's worth establishing the foundation. If you're new to MVP development, or want to revisit what the term actually means in a software context, the next section covers it clearly.

What Is MVP in Software Development?

A Minimum Viable Product (MVP) in software development is the simplest version of your product that can be released to real users. It contains only the core features needed to validate one specific assumption — does this solve a real problem for real people? — without building the full product first.

The concept comes from Eric Ries's lean startup methodology: build the smallest thing that generates learning, measure what happens, and learn fast enough to steer before you run out of time or money. The MVP isn't the finished product. It's the fastest path to evidence.

In software development specifically, an MVP means production-ready code — not a prototype, not a Figma file, not a demo. Real users interact with it. Real data comes back. That data either validates the direction or tells you to pivot before you've committed twelve months of runway to the wrong thing.

Some of the most successful products started as MVPs with almost nothing:

Airbnb launched as a simple website where the founders rented air mattresses in their own apartment. No payment system, no global listings. Just enough to test whether strangers would pay to sleep in someone else's home.

Dropbox published a demo video of an early build before the product was open to the public. The surge in waiting-list sign-ups validated demand. Then they built out the full product.

Spotify started as an invite-only, desktop-only app in a handful of European markets, with a limited music library, to test streaming behavior before investing in licensing at scale.

None of these were polished. All of them answered the question that mattered.

For founders evaluating MVP development services, the decision isn't whether to build an MVP; it's how to scope it tightly enough that the first build tests your actual core assumption rather than your entire product vision.

What an MVP is not:

- It's not a rough build with bugs excused by "it's just an MVP"

- It's not a prototype (no real code, no real users; more on this distinction below)

- It's not a beta (MVP comes before broad release; beta comes after)

- It's not your full product with features removed

The minimum in MVP refers to scope. Not quality. The code you ship in an MVP is the foundation of the product you'll scale. Build it like it matters because it does.

One question founders get wrong more often than almost anything else: is what I'm about to build an MVP, or is it a prototype?

They're not the same thing. And confusing them is one of the most expensive mistakes you can make at the early stage. Let's clear that up.

MVP vs Prototype: Differences and When to Use Each

Most founders use "MVP" and "prototype" interchangeably.

Treating them as the same leads to one of two expensive mistakes: building a full MVP when a prototype would have answered your question for a tenth of the cost, or shipping a prototype to real users and discovering it can't handle production load.

Here's the practical difference:

| | Prototype | MVP |
|---|---|---|
| What it is | A simulation of the product | Working production software |
| Code behind it | None or throwaway | Real, deployable code |
| Users | Internal team, investors | Real end users |
| Purpose | Validate concept, test design, secure funding | Validate product-market fit with real behavior |
| Data generated | Qualitative (reactions, design feedback) | Quantitative (usage, retention, conversion) |
| Typical timeline | 1–3 weeks | 6–8 weeks |
| What follows it | MVP build decision | Iteration or pivot |

When to Start With a Prototype

Start with a prototype when you need to answer a question about design or concept before committing to a build.

You're pre-seed and uncertain whether the core idea resonates.

You need to raise funding and want something tangible beyond a slide deck.

Or you're technically uncertain and need to know whether the architecture is feasible before scoping the full build.

A well-built prototype can unlock a funding conversation that a Figma file can't. We build interactive, investor-ready prototypes in 1–3 weeks when founders need validation before the full MVP commitment. See our startup MVP development page for how the two stages connect.

When to Go Straight to MVP

Go straight to an MVP when you've already validated the concept through customer conversations, previous products, or market evidence.

At this stage, what you need is real user behavior data. You're ready to test whether people will actually use and pay for the product, not just say they would.

The rule of thumb: if your biggest uncertainty is "will users want this?" start with a prototype. If it's "will users keep using this?" build the MVP.

Now that you're clear on the difference between a prototype and an MVP, the next question is: what changes when your product is powered by AI? The answer is more significant than most founders expect and it starts at the definition level.

What Is an AI MVP?

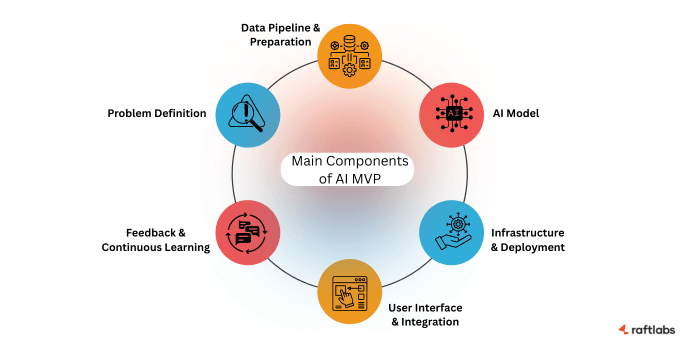

An AI MVP (Minimum Viable Product) is a functional, pared-down version of your application that integrates artificial intelligence to solve one core problem. It’s designed to validate the product’s value before you commit to a full-scale investment.

The focus of AI MVP development is not on building every feature, but on showing how AI adds measurable value. With just enough data, automation, and functionality, it allows you to test with real users in real conditions.

Unlike a traditional MVP, an AI-powered MVP requires attention to data quality, model performance, and seamless integration with existing systems. The aim is to prove that the AI works reliably in practice, not just in theory.

An AI MVP helps you test assumptions early, reduce risks, and build confidence in your product vision before scaling further.

Understanding what an AI MVP is sets the context. But the real difference shows up when you put it side by side with a traditional MVP because the two follow fundamentally different rules across almost every dimension. Here's the comparison.

AI MVP vs Traditional MVP: Key Differences and Advantages

While both approaches aim to validate ideas quickly, AI MVP development follows a very different path compared to traditional MVPs. The distinction lies not only in technology but also in how data, iteration, and scalability are handled.

| Aspect | Traditional MVP | AI MVP Development |
|---|---|---|
| Core driver | Functional feature set validation | Data-driven functionality and AI model performance validation |
| Role of data | Supportive, not central | Foundational: AI outcomes depend on quality and availability of data |
| Improvement cycle | Iteration based on user feedback | Continuous data/model retraining + user + data feedback |
| Complexity | Lower: basic logic, UI, and user flows | Higher: requires data pipelines, model training infra, explainability, monitoring |
| Scalability | Scale with users and additional features | Scale with larger datasets, retraining cycles, infra optimization, drift control |
| Validation metrics | Adoption, usability | Accuracy, precision, recall + adoption and usability |
| Risks | Adoption gaps, usability issues | Data bias, compliance, ethical risks, security, model drift |

1. Core Driver

Traditional MVPs focus on delivering a functional feature set to test usability and product-market fit. An AI MVP is centered on validating whether the AI model itself can deliver measurable value, which is often more critical than the feature set.

2. Role of Data

In a traditional MVP, data supports features but is not central to success. In AI-powered MVP development, data is the foundation. The quality, volume, and relevance of data directly determine whether your AI can generate useful and accurate outputs.

3. Path to Improvement

A traditional MVP improves through user feedback that guides future features. An AI MVP evolves through continuous model retraining, improved datasets, and validation cycles. Feedback is both user-driven and data-driven, making iteration more complex but also more powerful.

4. Complexity of Build

A traditional MVP may require basic logic, clean UI, and smooth user flows. An AI startup MVP development effort requires data pipelines, training infrastructure, monitoring tools, and explainability layers. This higher complexity demands early planning around architecture and scalability.

5. Scalability Approach

Scaling a traditional MVP is mainly about adding features and handling more users. With AI MVP development, scalability means planning for larger datasets, faster retraining cycles, stronger infrastructure, and continuous monitoring of model performance to avoid drift.

6. Testing and Validation

Traditional MVPs measure usability and adoption. AI MVPs test predictive accuracy, precision, recall, or other model-specific KPIs. These metrics must be validated alongside user adoption to confirm whether the AI is genuinely improving outcomes.
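As a rough illustration of how these model metrics relate, the sketch below computes accuracy, precision, and recall from confusion counts. It assumes a binary classifier; the class name, function name, and counts are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Counts from comparing model predictions with ground-truth labels."""
    true_positive: int
    false_positive: int
    true_negative: int
    false_negative: int


def classification_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Return accuracy, precision, and recall; 0.0 when a denominator is zero."""
    total = c.true_positive + c.false_positive + c.true_negative + c.false_negative
    predicted_positive = c.true_positive + c.false_positive
    actual_positive = c.true_positive + c.false_negative
    return {
        "accuracy": (c.true_positive + c.true_negative) / total if total else 0.0,
        "precision": c.true_positive / predicted_positive if predicted_positive else 0.0,
        "recall": c.true_positive / actual_positive if actual_positive else 0.0,
    }


# Hypothetical evaluation on 100 labeled examples.
counts = ConfusionCounts(true_positive=40, false_positive=10,
                         true_negative=45, false_negative=5)
metrics = classification_metrics(counts)
print(metrics)
```

Which of the three matters most depends on the product: a screening tool may care more about recall, while an automated action may need high precision.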

7. Risk Considerations

In traditional MVPs, risks are usually about adoption or usability gaps. In AI MVPs, risks also include bias in datasets, ethical issues, compliance, and security. These must be addressed at the MVP stage to avoid rework and reputational damage later.

Treating an AI MVP like a regular MVP with added AI features misses the point. The real focus is on validating data quality, model reliability, and long-term scalability.

Check out our AI MVP development services if you want help in building your MVP to turn your vision into reality.

The differences between the two approaches make clear why building an AI MVP isn't just "building an MVP with AI bolted on."

Each approach has its own set of advantages. Understanding those advantages is what helps you decide which path is right for your product.

Let's look at both.

Benefits of Building an MVP First

MVP development is not just about testing ideas quickly. It’s a disciplined approach that helps you validate your concept, strengthen your data strategy, and reduce both technical and business risks before committing serious resources. Here are the key benefits:

- Faster Market Validation

- Reduced Cost and Risk

- Smarter Product Iteration

- Investor and Stakeholder Confidence

Let us break down each benefit to understand more clearly.

1. Faster Market Validation

With an MVP, you release only the features that prove your concept. Instead of spending months building a full solution, you can launch a simplified version, gather real usage data, and validate whether your AI-driven approach truly meets user needs.

2. Reduced Cost and Risk

AI projects often require significant investment in infrastructure, data pipelines, and model training. Building an MVP first helps you contain costs by focusing only on core essentials.

This reduces the risk of overspending on features or models that the market may reject, and it keeps your custom software development focused on the features that business and user requirements actually call for.

3. Smarter Product Iteration

AI thrives on real-world data. By launching an MVP, you begin collecting authentic user interactions that improve your model accuracy over time.

This feedback loop ensures that every iteration is more relevant and aligned with actual user behavior rather than hypothetical assumptions.

4. Investor and Stakeholder Confidence

An AI-powered MVP gives you something tangible to show. Investors and stakeholders respond better to working products than presentations.

Demonstrating early traction, such as improved efficiency, customer engagement, or predictive accuracy, makes it easier to raise funding and secure executive support.

Check out our AI development services to build your tailored AI-powered product.

Advantages of an AI-Powered MVP

The benefits above apply to any MVP, traditional or AI. But when your product is powered by machine learning, LLMs, or AI automation, a specific set of additional advantages comes into play.

The benefits of building an AI-powered MVP are:

- Personalized User Experience

- Scalability Insights from the Start

- Early Data Strategy Validation

- Integration Feasibility Testing

- Compliance and Privacy Readiness

- Competitive Differentiation

- Foundation for Continuous Learning

Let us look at each benefit more closely.

1. Personalized User Experience

Even a lightweight AI MVP can deliver personalization that traditional MVPs cannot.

From smart recommendations to adaptive workflows, these early features show users the value of AI from day one and help create stronger product loyalty right from the start.

2. Scalability Insights from the Start

Scaling AI models is challenging. By testing at the MVP stage, you can evaluate how your models perform with larger datasets, identify infrastructure gaps, and spot bottlenecks early.

This foresight saves significant costs when scaling to enterprise-level usage later.

3. Early Data Strategy Validation

Data is the foundation of any AI product. An MVP helps you test data pipelines, evaluate data quality, and validate how data flows in production environments.

This ensures your models are trained on reliable inputs and reduces costly rework down the line.

4. Integration Feasibility Testing

AI rarely exists in isolation. It often needs to connect with CRMs, ERPs, or custom platforms.

Testing these integrations early during the MVP phase uncovers compatibility issues, saving you from discovering expensive roadblocks after you’ve already invested heavily.

5. Compliance and Privacy Readiness

AI products face strict compliance standards in industries like healthcare, finance, and education.

An MVP allows you to address privacy, security, and governance requirements early. Validating compliance from day one builds trust with regulators, investors, and enterprise clients.

6. Competitive Differentiation

Launching an AI MVP allows you to test and refine your unique positioning in the market.

You can quickly evaluate whether your AI capability provides a measurable edge in personalization, automation, or efficiency, helping you stand out from competitors faster.

7. Foundation for Continuous Learning

AI products are never static. An MVP sets up the feedback loops your models need for ongoing improvement. This foundation allows your product to evolve naturally as data volumes grow, ensuring technical debt stays under control while accuracy improves.

In short, the benefits of AI in MVP development extend well beyond faster launches. They give you the clarity, confidence, and infrastructure needed to scale responsibly while staying aligned with real business and user needs.

With a clear picture of why the MVP-first approach works and why it works even better with AI, the logical next question is: what does the build process actually look like? We will cover the traditional path first, then the AI-specific divergence.

How to Build a Traditional MVP: Step by Step Process

Before your product has AI at its core, the MVP development process follows a tried-and-tested path. This is how to develop an MVP that ships in 6–12 weeks, validates what matters, and gives you a codebase worth scaling.

If your product is AI-powered, skim this section for the foundation — the steps below are where the two paths share the same ground. The AI MVP process diverges meaningfully at the data strategy and architecture stage, which is covered in the next section.

Step 1: Define the One Problem You're Solving

Every successful MVP solves one problem for one type of user. Not two problems. Not one problem for everyone.

Write it as a single sentence: [User type] struggles to [problem] when [context]. We solve this by [core mechanism].

If you can't write that sentence without an "and," your scope is already too wide. Founders who skip this step build MVPs that nobody can explain, including them.

Step 2: Validate Before You Build

Talk to 10–15 people in your target user group before writing a line of code. Not to pitch them. To understand how they currently solve the problem and what it costs them in time, money, or frustration.

The question you're trying to answer: is this problem real, frequent, and worth paying to solve? If the answer to any of those is "maybe," build the prototype first.

Step 3: Define Core User Stories and Prioritize Features

A user story maps what a user needs to do to get value from your product: "As a [user], I want to [action] so that [outcome]."

Once you have 10–20 user stories, run them through the MoSCoW method: Must have, Should have, Could have, Won't have. Your first MVP sprint contains only the Must-haves. Everything else is version two.

This is where product discovery pays for itself. A structured discovery sprint identifies the minimum feature set that tests your core assumption — before a single sprint is planned.
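The MoSCoW filtering described above can be sketched in a few lines. The story contents and the `first_sprint_scope` helper are hypothetical; the point is only that the first sprint keeps the Must-haves and defers the rest.

```python
from dataclasses import dataclass
from enum import Enum


class Priority(Enum):
    MUST = "Must have"
    SHOULD = "Should have"
    COULD = "Could have"
    WONT = "Won't have"


@dataclass
class UserStory:
    user: str
    action: str
    outcome: str
    priority: Priority

    def __str__(self) -> str:
        return f"As a {self.user}, I want to {self.action} so that {self.outcome}."


def first_sprint_scope(stories: list[UserStory]) -> list[UserStory]:
    """Keep only Must-have stories; everything else waits for version two."""
    return [s for s in stories if s.priority is Priority.MUST]


# Hypothetical stories for an invoicing product.
stories = [
    UserStory("freelancer", "send an invoice", "I get paid", Priority.MUST),
    UserStory("freelancer", "set payment reminders", "I chase less", Priority.SHOULD),
    UserStory("freelancer", "pick a colour theme", "invoices match my brand", Priority.COULD),
    UserStory("freelancer", "run payroll", "I manage staff", Priority.WONT),
]

scope = first_sprint_scope(stories)
for story in scope:
    print(story)
```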

Step 4: Choose Your Tech Stack for a Scalable Architecture

The technology decisions you make in week one are still alive in year three. An MVP built on the wrong architecture doesn't just cost money to fix — it costs time during the period when you have the least of both.

For most web MVPs: React or Next.js frontend, Node.js or Python backend, PostgreSQL for relational data, deployed on AWS or Vercel. For mobile: Flutter for cross-platform unless device-specific performance demands native Swift or Kotlin.

The goal is a scalable architecture that a second engineer can inherit, not one only the original developer can explain.

If you're hiring MVP developers externally, ask before you sign: who owns the architectural decisions, and is the codebase yours on day one?

Step 5: Build in Sprints: Working Software Every Two Weeks

Sprint-based development means you have something working and testable at the end of every two-week cycle — not a reveal at week twelve. This matters because direction changes are cheapest before they've been built.

Each sprint produces tested, deployable code. You review it, feedback feeds the next sprint. The final sprint delivers the production-ready MVP — deployed, monitored, ready for real users.

Step 6: Launch to a Controlled User Group

Don't launch publicly first. Launch to 20–50 users who match your target profile. Measure what they do, not what they say. Usage data — activation rates, return visits, feature usage, drop-off points — tells you more in one week than 100 interviews.

Define your success metric before launch. What does "validated" look like? A retention rate above X%? A conversion from free to paid? A support volume below Y? If you can't define success before launch, you won't know what the data means after.
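One way to make "define success before launch" concrete is to write the threshold down as data before any usage numbers exist. The sketch below is a minimal illustration; the criterion names, thresholds, and user IDs are hypothetical placeholders for your own X and Y.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriterion:
    """A threshold agreed before launch, so the data cannot move the goalposts."""
    name: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


def retention_rate(cohort: set[str], returned: set[str]) -> float:
    """Share of the launch cohort that came back in the measurement window."""
    return len(cohort & returned) / len(cohort) if cohort else 0.0


# Hypothetical thresholds, written down before launch.
retention_goal = SuccessCriterion("week-2 retention", threshold=0.30)
support_goal = SuccessCriterion("weekly support tickets", threshold=10, higher_is_better=False)

# Hypothetical data collected after launch.
cohort = {"u1", "u2", "u3", "u4", "u5"}
returned = {"u1", "u3", "u9"}  # u9 is not in the cohort, so it is ignored

observed_retention = retention_rate(cohort, returned)
print(retention_goal.name, observed_retention, retention_goal.is_met(observed_retention))
print(support_goal.name, 14, support_goal.is_met(14))
```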

Step 7: Measure, Learn, and Decide

After your first user cohort, you reach one of three conclusions:

- Validated — users are doing what your business model depends on. Scale the build.

- Partially validated — some assumptions held, others didn't. Iterate the MVP.

- Invalidated — the assumption was wrong. Pivot the problem, user type, or mechanism.

None of these is a failure. The failure is spending six months and $200,000 on a full product before you had this data.
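The three outcomes above can be expressed as a simple decision rule. This sketch assumes you have listed your key assumptions and recorded whether each one held; treating "none held" as invalidated is a simplification made for illustration, and the assumption names are hypothetical.

```python
def decide_next_step(assumption_results: dict[str, bool]) -> str:
    """Map per-assumption results to one of the three conclusions."""
    if not assumption_results:
        raise ValueError("List at least one assumption before deciding.")
    held = sum(assumption_results.values())
    if held == len(assumption_results):
        return "validated: scale the build"
    if held == 0:
        return "invalidated: pivot the problem, user type, or mechanism"
    return "partially validated: iterate the MVP"


# Hypothetical results from the first user cohort.
results = {
    "users complete onboarding": True,
    "users return within a week": True,
    "users convert from free to paid": False,
}
decision = decide_next_step(results)
print(decision)
```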

For a complete breakdown of MVP software development services, including team composition and what drives timelines up or down — see our MVP development page.

That’s the standard path. When AI sits at the core of your product, the process changes significantly. In the next section, we walk you through how the AI MVP development process works, where it differs from the traditional approach, and why you need to start with a clear data strategy.

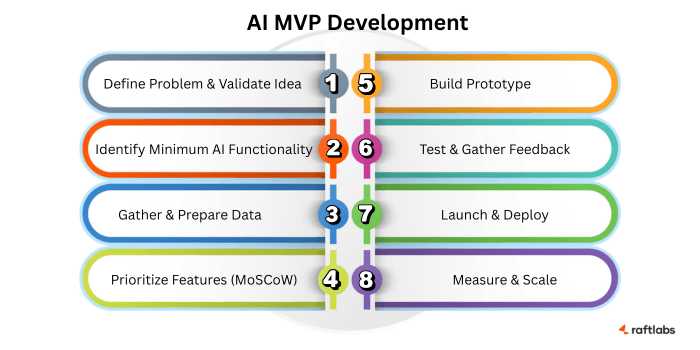

AI MVP Development in 8 Steps

AI MVP development requires a disciplined approach that balances product vision with technical feasibility. Unlike traditional MVP development, an AI MVP depends on data, iterative training, and real-world feedback.

A clear roadmap helps you move from an idea to a working AI-powered MVP that can be validated in the market. Here is the structured, step-by-step process we follow:

Step 1: Define the Problem and Validate the Idea

AI should solve a clear and meaningful problem, not just showcase technology. Before any code is written, the focus should be on identifying the exact pain point and validating whether AI is the right solution.

Questions to address at this stage:

- What specific business or user problem are we solving?

- How do users currently solve it, and where are the gaps?

- What measurable improvement will AI bring compared to existing solutions?

Example: A logistics company wants to optimize delivery routes. The MVP hypothesis could be: “If we apply AI to real-time traffic and order data, delivery times can be reduced by 20% without increasing costs.”
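A narrow hypothesis like this one can be checked with simple arithmetic. The sketch below compares average delivery times with and without the AI routing; the numbers are invented, and a plain comparison of means ignores statistical significance, so treat it as a first sanity check rather than proof.

```python
from statistics import mean


def relative_reduction(baseline: list[float], with_ai: list[float]) -> float:
    """Fractional drop in the average, e.g. 0.22 means 22% faster."""
    return (mean(baseline) - mean(with_ai)) / mean(baseline)


TARGET_REDUCTION = 0.20  # the 20% stated in the hypothesis

# Hypothetical delivery times in minutes.
baseline_minutes = [50.0, 60.0, 40.0]   # current routing, average 50
ai_minutes = [38.0, 41.0, 38.0]         # AI-assisted routing, average 39

reduction = relative_reduction(baseline_minutes, ai_minutes)
hypothesis_supported = reduction >= TARGET_REDUCTION
print(f"reduction={reduction:.0%}, supported={hypothesis_supported}")
```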

Expert Tip: Many founders overestimate what AI can achieve in the MVP stage. Keep the hypothesis narrow and measurable. The goal is to validate an outcome, not to impress with complexity.

Step 2: Identify the Minimum AI Functionality

An MVP should prove feasibility with one core AI-driven feature. It doesn’t need to be fully automated or loaded with features. Often, a semi-automated or rule-based approach is enough to test value.

Questions to consider:

- What is the simplest AI-powered functionality that demonstrates product value?

- Can a lightweight model, rule-based system, or human-in-the-loop process validate the outcome?

Example: Instead of building a complete AI hiring platform, the MVP might be a resume parser that ranks candidates by skills and experience. Even a keyword-based system, validated with recruiter feedback, can confirm the concept.
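A keyword-based ranker of the kind mentioned above can be very small. The following is a minimal sketch, not a production parser: it assumes resumes are already plain text, matches single-word skills only, and uses made-up candidates and skills.

```python
import re


def skill_score(resume_text: str, required_skills: set[str]) -> float:
    """Fraction of required single-word skills that appear in the resume."""
    words = set(re.findall(r"[a-z0-9+#]+", resume_text.lower()))
    matched = {skill for skill in required_skills if skill.lower() in words}
    return len(matched) / len(required_skills) if required_skills else 0.0


def rank_candidates(resumes: dict[str, str],
                    required_skills: set[str]) -> list[tuple[str, float]]:
    """Return (candidate, score) pairs, best match first."""
    scored = [(name, skill_score(text, required_skills)) for name, text in resumes.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical inputs.
resumes = {
    "Candidate A": "Python and SQL developer with Docker experience",
    "Candidate B": "Java developer, some SQL",
}
ranking = rank_candidates(resumes, {"python", "sql", "docker"})
print(ranking)
```

Recruiter feedback on rankings like these is what tells you whether a learned model is worth building next.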

Expert Tip: Many successful AI startups launch with human-in-the-loop workflows, where humans correct AI outputs in real time. This provides user value immediately while generating labeled data to improve the model for later versions.
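A human-in-the-loop workflow can be sketched as a confidence gate: confident predictions pass through, and the rest go to a person whose answer is stored as labeled data. The class name, the 0.8 threshold, and the `ask_human` callback are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class HumanInTheLoop:
    # Callback taking (input, model suggestion) and returning the final label.
    ask_human: Callable[[str, str], str]
    threshold: float = 0.8
    training_examples: list[tuple[str, str]] = field(default_factory=list)

    def decide(self, item: str, suggestion: str, confidence: float) -> str:
        """Accept confident predictions; route the rest to a human reviewer."""
        if confidence >= self.threshold:
            return suggestion
        final_label = self.ask_human(item, suggestion)
        # Every human decision becomes a labeled example for later training.
        self.training_examples.append((item, final_label))
        return final_label


# Stand-in reviewer for the example; a real one would be a UI or ticket queue.
workflow = HumanInTheLoop(ask_human=lambda item, suggestion: "refund_request")

confident = workflow.decide("Where is my order?", "order_status", confidence=0.93)
uncertain = workflow.decide("I want my money back", "order_status", confidence=0.41)
print(confident, uncertain, workflow.training_examples)
```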

Step 3: Gather and Prepare a High-Quality Dataset

AI performance depends more on data quality than data volume. For an MVP, the focus should be on starting small with clean, representative data. Collecting millions of records upfront usually wastes time and budget.

Approaches to consider:

- Use open-source datasets when available.

- Begin with a smaller, high-quality dataset instead of a massive noisy one.

- Explore synthetic data or manually labeled samples for early experiments.

Example: An AI medical image classifier can begin with 1,000 carefully annotated X-rays instead of trying to acquire millions of images across hospitals. The smaller dataset is sufficient to validate accuracy and adoption potential.

Avoid This Mistake: Many AI startups assume “bigger is better” with data. In reality, a well-structured dataset with clear labeling is more valuable for MVP validation than an overwhelming but inconsistent dataset.
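A few basic checks catch most labeling problems in a small dataset before any training starts. The sketch below assumes the dataset is a list of (sample ID, label) pairs; the `audit_dataset` helper and the sample rows are hypothetical.

```python
from collections import Counter, defaultdict


def audit_dataset(rows: list[tuple[str, str]]) -> dict[str, object]:
    """Report class balance, missing labels, duplicates, and conflicting labels."""
    labels_by_sample: dict[str, set[str]] = defaultdict(set)
    for sample_id, label in rows:
        labels_by_sample[sample_id].add(label)
    return {
        "label_counts": Counter(label for _, label in rows),
        "missing_labels": sum(1 for _, label in rows if not label),
        # Rows beyond the first occurrence of each sample ID.
        "duplicate_rows": len(rows) - len(labels_by_sample),
        # Samples that were given more than one distinct label.
        "conflicting_samples": sorted(
            sample for sample, labels in labels_by_sample.items() if len(labels) > 1
        ),
    }


# Hypothetical annotation export.
rows = [
    ("xray_001", "normal"),
    ("xray_002", "pneumonia"),
    ("xray_002", "normal"),   # same image labeled twice, inconsistently
    ("xray_003", ""),         # label missing
]
report = audit_dataset(rows)
print(report)
```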

Step 4: Prioritize Features Using the MoSCoW Method

Scoping is one of the hardest parts of AI MVP development. The MoSCoW method (Must-have, Should-have, Could-have, Won’t-have) ensures that only the essential features make it into the MVP.

How to apply it:

- Must-have: The single AI-driven functionality that validates your hypothesis.

- Should-have: Features that add usability but are not critical to validation.

- Could-have: Nice-to-have improvements that can wait until scaling.

- Won’t-have: Features intentionally excluded from the MVP to avoid scope creep.

Example: For a customer support chatbot MVP, the must-have could be answering FAQs with AI. Should-haves might include sentiment detection, while features like multilingual support could be reserved for later releases.

Expert Tip: Ruthless prioritization is key. Many AI MVPs fail because teams spread efforts across multiple half-baked features instead of validating the one feature that proves business value.

Step 5: Build the AI MVP Prototype

Once the problem, functionality, and dataset are defined, the next step is creating a prototype. This is not a polished product but a working version that demonstrates how the AI will deliver value.

How to approach it:

- Create a minimal but functional interface (web app, chatbot, API, or dashboard).

- Start with the simplest working AI model. If necessary, use placeholders or semi-automated processes to simulate outputs.

- Ensure the prototype is usable by real users, even if not fully automated.

Example: For a financial forecasting tool, the MVP could be a web app where users upload a CSV of expenses and receive AI-driven projections. Even if early forecasts rely partly on human input, the workflow can validate product-market fit.
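To show how little is needed for a first version of this workflow, the sketch below reads a CSV of monthly expenses and projects the next months with a straight-line trend. The linear fit stands in for whatever model you eventually use, and the `month` and `amount` column names are assumptions.

```python
import csv
import io


def load_monthly_expenses(csv_text: str) -> list[float]:
    """Read the 'amount' column from CSV text, in file order."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [float(row["amount"]) for row in reader]


def project_next(values: list[float], periods: int = 1) -> list[float]:
    """Extend a least-squares straight line fitted to the past values."""
    n = len(values)
    if n < 2:
        raise ValueError("Need at least two data points to fit a trend.")
    xs = range(n)
    x_mean = sum(xs) / n
    y_mean = sum(values) / n
    slope = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, values))
             / sum((x - x_mean) ** 2 for x in xs))
    intercept = y_mean - slope * x_mean
    return [intercept + slope * (n + k) for k in range(periods)]


# Hypothetical upload from a user.
uploaded = """month,amount
2025-01,100
2025-02,110
2025-03,120
2025-04,130
"""
history = load_monthly_expenses(uploaded)
forecast = project_next(history, periods=2)
print(forecast)
```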

Expert Tip: Keep the prototype lean. Overinvesting in polished design or advanced UX at this stage often leads to delays. The priority is to validate the AI’s usefulness, not aesthetics.

Step 6: Test and Gather Feedback

The value of an AI MVP lies in how users respond to it. Testing with real users provides insights not only about AI accuracy but also about usability and adoption potential.

Key considerations:

- Deploy the prototype to a closed beta group or selected early adopters.

- Collect quantitative data such as accuracy, precision, recall, and latency.

- Gather qualitative feedback on ease of use, clarity of results, and overall experience.

- Track where AI predictions fail and document how users compensate.

Example: A customer service chatbot MVP should be tested with real support queries. Success is measured not just by response accuracy but also by whether users trust the AI and continue engaging with it.

Expert Tip: Feedback should guide the next iteration. Many startups waste time tweaking models endlessly. Instead, focus on whether the AI solves the business problem, even if performance metrics are imperfect at the start.

Step 7: Launch and Deploy

If early tests show promise, the next step is deploying the AI MVP to a wider audience. This stage is about exposing the product to real-world environments where usage is less predictable.

Deployment checklist:

- Roll out gradually, starting with a limited user group, then expanding.

- Monitor both infrastructure (uptime, latency, scaling) and AI-specific performance (accuracy, drift).

- Provide clear support channels for users to report issues or inaccuracies.

Example: An AI-powered resume screener might first be deployed to one recruitment team in a company before scaling to all departments. This phased rollout helps refine the product without overwhelming the system.
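One common way to implement a gradual rollout is to hash each user ID into a stable bucket and enable the feature for buckets below a percentage. This is a sketch of that general technique, not a description of any specific feature-flag product; the salt string and user IDs are made up.

```python
import hashlib


def in_rollout(user_id: str, percent: int, salt: str = "ai-mvp-rollout") -> bool:
    """Deterministically place a user in one of 100 buckets and compare to percent."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable value in 0..99
    return bucket < percent


# Hypothetical users; raising the percentage only ever adds users.
users = [f"user-{i}" for i in range(1000)]
at_10 = {u for u in users if in_rollout(u, 10)}
at_50 = {u for u in users if in_rollout(u, 50)}
print(len(at_10), len(at_50))
```

Because the bucket depends only on the salt and the user ID, a user who sees the AI feature at 10% keeps seeing it as you expand to 50% and beyond.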

Expert Tip: Do not equate launch with completion. An AI MVP in production is still experimental. Treat it as a live learning environment where real data and usage patterns guide further refinement.
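Monitoring for drift can start as a rolling comparison against the accuracy you measured before launch. The sketch below is one simple approach, assuming ground-truth outcomes eventually arrive for each prediction; the window size, tolerance, and class name are illustrative choices.

```python
from collections import deque
from typing import Optional


class AccuracyDriftMonitor:
    """Flag drift when rolling accuracy falls below baseline minus a tolerance."""

    def __init__(self, baseline_accuracy: float, window: int = 100,
                 tolerance: float = 0.05) -> None:
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        self._outcomes: deque[bool] = deque(maxlen=window)

    def record(self, prediction: str, actual: str) -> None:
        self._outcomes.append(prediction == actual)

    def rolling_accuracy(self) -> Optional[float]:
        if not self._outcomes:
            return None
        return sum(self._outcomes) / len(self._outcomes)

    def drifted(self) -> bool:
        # Only judge once the window is full, to avoid noisy early alarms.
        if len(self._outcomes) < self._outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline_accuracy - self.tolerance


# Hypothetical production feedback: 7 correct predictions, then 3 wrong ones.
monitor = AccuracyDriftMonitor(baseline_accuracy=0.90, window=10)
for _ in range(7):
    monitor.record("approve", "approve")
for _ in range(3):
    monitor.record("approve", "reject")
print(monitor.rolling_accuracy(), monitor.drifted())
```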

Step 8: Measure and Scale

Scaling should only happen when both business and technical metrics are stable. Expanding too early is one of the most common reasons AI MVPs fail.

What to measure:

- Business KPIs: engagement, retention, revenue impact, cost savings.

- AI metrics: accuracy, recall, precision, false positives/negatives, and drift over time.

- Infrastructure KPIs: cloud costs, response times, ability to auto-scale.

Scaling approach:

- Retrain models with larger datasets as usage grows.

- Strengthen infrastructure with enterprise-grade cloud services.

- Add integrations and features based on validated user demand.

Example: A healthcare AI MVP predicting patient readmissions should only scale once accuracy meets compliance standards and adoption rates among doctors are high. Scaling without these guardrails risks both cost overruns and reputational damage.

Expert Tip: Scaling is not just technical. It is about proving ROI. If the MVP shows measurable value for users and stakeholders, investment in scaling becomes justified.

By following this structured process, you avoid the common traps of over-engineering, poor data planning, or scaling too soon. Each step validates the product, the AI model, and the business case, ensuring your MVP grows into a reliable AI-powered solution.

You now have a clear picture of the build process, both for traditional and AI-powered products. The next question most founders face isn't how to build, but who should build it.

And that decision has more business impact than most people expect. Here's how to think through it.

Sourcing Model: In-House vs. Partial Outsource vs. Full Outsource

The process for building an MVP is only half the decision. The other half is who builds it, and that choice affects timeline, cost, IP ownership, and what happens the day the MVP ships.

Here are the three models and when each is the right call.

Option 1: In-House Team

You hire the engineers, designers, and product people yourself. Full control, full ownership, full cost.

| Factor | Reality |
|---|---|
| Time to first line of code | 3–6 months (hiring pipeline, onboarding) |
| Annual cost (UK/US market) | £80,000–£120,000 per senior engineer |
| Control | Maximum |
| IP ownership | Clear from day one |
| Risk | High; wrong hire at MVP stage is expensive to undo |
| Best for | Funded companies building long-term technical teams |

The in-house model makes sense when you have Series A funding, a clear product vision, and the time to hire well. At pre-seed or seed stage, it typically burns too much runway before a line of product code is written.

Option 2: Partial Outsource (Staff Augmentation)

You keep a small internal team, typically a technical co-founder or lead, and bring in specialist engineers to fill capability gaps or accelerate delivery.

| Factor | Reality |
|---|---|
| Time to start | 1–3 weeks |
| Cost | Lower than full in-house; variable by sprint or month |
| Control | Shared; your direction, external execution |
| IP ownership | Depends on contract; confirm before starting |
| Risk | Context gaps between internal and external team |
| Best for | Technical founders who need specialist depth (AI, mobile, infrastructure) |

This is the right model when you have strong internal product direction but need specific technical skills you don't have in-house. Hiring dedicated MVP developers on a sprint or monthly basis lets you scale capacity without the permanent salary commitment.

Option 3: Full Outsource (Product Engineering Partner)

You partner with an MVP development company that owns the full delivery — product discovery, architecture, design, development, QA, and launch. You stay involved on product decisions; they own the build.

| Factor | Reality |
|---|---|
| Time to start | 1–2 weeks |
| Fixed price range | USD 10,000 – 20,000 |
| Control | Outcome-based; you define what's built, partner decides how |
| IP ownership | Should transfer fully at completion (confirm in contract) |
| Risk | Quality depends heavily on partner selection |
| Best for | First-time founders, pre-seed to seed stage, founders without a CTO |

Full outsource is the fastest path from concept to production-ready MVP for founders without a technical team. The risk is partner quality — specifically whether the codebase you receive is documented, scalable, and owned entirely by you. For a transparent look at fixed-price engagement structures, see our MVP development pricing page.

| Your situation | Right model |
|---|---|
| Series A funding + time to hire | In-house |
| Technical co-founder + skill gaps | Partial outsource |
| Product vision, no technical team | Full outsource |
| Funded startup, need to move fast | Full outsource or partial |
| Enterprise team extending a product | Partial outsource |

One question that cuts through most of the deliberation: when the MVP ships, who understands the codebase well enough to own it going forward? If the answer is "only the people who built it," that's a dependency you'll pay to fix later.

For an independent comparison of how leading agencies structure their MVP delivery, see our breakdown of the top MVP development companies.

Once you've decided who's building, there are a handful of technical and commercial decisions that determine whether the MVP you ship is something you can scale from, or something you have to rebuild.

The next section covers the key considerations that catch founders off guard.

Key Considerations for Building an AI MVP

Building an AI MVP requires more than just following a checklist. The right strategy and early decisions can determine whether your MVP scales successfully or stalls after launch. Here are the factors you should prioritize:

1. Choosing the Right Development Partner

AI MVP development is complex. You need a team that understands both AI/ML models and product delivery.

Look for proven experience in MVP launches, domain-specific AI use cases, and the ability to balance speed with long-term scalability.

2. Budgeting and Resource Allocation

AI startup MVP development involves unique costs beyond coding. You need to plan for data acquisition, storage, annotation, cloud infrastructure, and talent such as AI engineers and product managers.

Misaligned budgeting often leads to delays or cutting critical features.

3. User-Centric Design

AI models only add value if outputs are usable. Prioritize design that delivers insights in clear, actionable formats.

Involve users early to test whether your MVP feels intuitive, and ensure explainability is built into the experience from the start.

4. Data Quality and Availability

AI for MVP development depends entirely on reliable data. Build with datasets that are representative, unbiased, and sufficient in size.

Poor data quality leads to poor predictions, which can undermine adoption before your product even has a chance to grow.
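A quick audit before training can surface the most common data problems: missing values, duplicate rows, and skewed labels. The sketch below uses only the Python standard library; the function name `data_quality_report`, the field names, and the sample rows are hypothetical. It assumes each record is a flat dictionary of hashable values.

```python
from collections import Counter


def data_quality_report(rows: list[dict], label_key: str) -> dict:
    """Summarize missing values, exact duplicate rows, and label balance."""
    missing = Counter()
    labels = Counter()
    seen = set()
    duplicates = 0
    for row in rows:
        for key, value in row.items():
            # Treat None and empty strings as missing.
            if value is None or value == "":
                missing[key] += 1
        # A sorted tuple of items identifies a row regardless of key order.
        fingerprint = tuple(sorted(row.items()))
        if fingerprint in seen:
            duplicates += 1
        seen.add(fingerprint)
        labels[row.get(label_key)] += 1
    total = len(rows)
    return {
        "rows": total,
        "missing_by_field": dict(missing),
        "duplicate_rows": duplicates,
        "label_share": {k: v / total for k, v in labels.items()} if total else {},
    }


# Made-up support-ticket samples, for illustration only.
rows = [
    {"text": "refund request", "label": "billing"},
    {"text": "refund request", "label": "billing"},
    {"text": "", "label": "billing"},
    {"text": "app crashes on login", "label": "bug"},
]
report = data_quality_report(rows, label_key="label")
```

Here the report would flag one empty `text` field, one duplicate row, and a 75/25 label split. None of these checks proves a dataset is representative or unbiased, but they are cheap to run and catch problems that otherwise show up later as poor predictions.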

5. Model Selection and Scalability

Balance simplicity with future readiness. Start with models that are explainable and lightweight, but plan for scaling as datasets and usage grow.

Over-engineering early creates complexity, while under-preparing makes scaling costly later.

6. Security, Privacy, and Compliance

Protecting user data cannot wait until after launch. Address GDPR, HIPAA, or industry-specific compliance from the MVP stage.

Secure infrastructure and privacy-first design reduce the risk of rework and build early trust with users and stakeholders.

7. Integration with Existing Systems

For enterprise adoption, your MVP must work seamlessly with existing tools like CRMs, ERPs, or marketing platforms.

Testing integrations early avoids expensive surprises later and increases the chance of faster adoption within established workflows.

8. Monitoring and Feedback Loops

AI models degrade over time due to drift. Build monitoring into your MVP from the beginning.

Track performance metrics continuously and create a feedback loop for retraining models so accuracy improves as usage grows.
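A feedback loop can start very small: record whether each prediction turned out to be correct, and flag the model for retraining when accuracy over a recent window drops below a floor. The sketch below is one minimal way to do this. The class name `RollingAccuracyMonitor`, the window size, and the 0.8 floor are all hypothetical choices, and it assumes ground-truth labels eventually arrive for each prediction.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent labelled predictions."""

    def __init__(self, window: int, min_accuracy: float):
        # deque with maxlen drops the oldest outcome automatically.
        self.outcomes = deque(maxlen=window)
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual) -> None:
        """Store whether a prediction matched the later-observed outcome."""
        self.outcomes.append(predicted == actual)

    def accuracy(self) -> float:
        if not self.outcomes:
            raise ValueError("no outcomes recorded yet")
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        # Only judge once the window is full, to avoid noisy early alerts.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy() < self.min_accuracy


# Made-up (predicted, actual) pairs, for illustration only.
monitor = RollingAccuracyMonitor(window=5, min_accuracy=0.8)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (1, 1), (0, 1)]:
    monitor.record(predicted, actual)

retrain = monitor.needs_retraining()
```

With three of five predictions correct, the window accuracy is 0.6 and the monitor flags retraining. In production you would likely use a much larger window and track precision and recall as well, but the structure stays the same.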

9. Team Alignment and Governance

AI-powered MVP development requires collaboration between product managers, data scientists, engineers, and designers.

Clear governance ensures accountability, reduces miscommunication, and keeps technical decisions aligned with business goals.

These considerations often determine whether your MVP becomes a foundation for growth or an expensive experiment. Addressing them upfront ensures that the benefits of AI in MVP development translate into real, long-term value for your business.

With a clear understanding of the build approach and the decisions that matter most, the remaining practical question is cost.

Here's an honest breakdown of what MVP development actually costs for both traditional and AI-powered products.

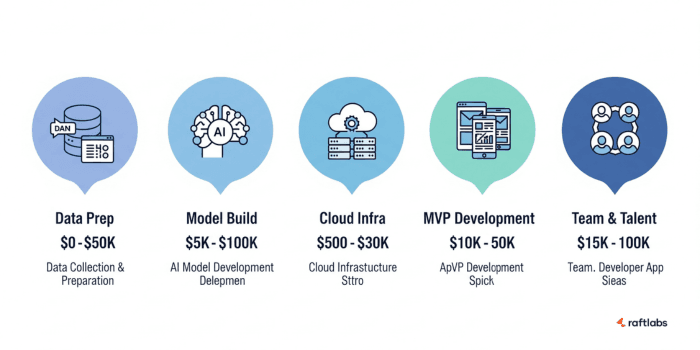

How Much Does It Cost to Develop an AI MVP?

The cost of AI MVP development depends on multiple factors such as data, model complexity, infrastructure, and team composition.

Below is a detailed breakdown of the major cost drivers with realistic ranges.

1. Data Collection and Preparation ($0 – $50,000)

Your AI MVP is only as strong as the data it learns from. Costs vary depending on whether you use freely available datasets, collect your own, or purchase specialized data.

Open-Source or Public Datasets (Free – Minimal Cost)

If existing open datasets meet your needs, you can reduce upfront costs significantly. This is common in natural language processing or image recognition use cases where large public datasets are already available.

Manual Data Collection and Labelling ($2,000 – $20,000)

When open data is insufficient, teams often build small datasets manually. This may involve annotating text, labeling images, or transcribing audio. Costs rise with dataset size and labeling accuracy requirements.

Proprietary or Industry-Specific Datasets ($10,000 – $50,000)

For niche applications such as healthcare diagnostics or financial modeling, data must be purchased from specialized providers. These datasets are more accurate but come at a premium cost.

2. AI Model Development ($5,000 – $100,000)

The complexity of your AI model has the largest impact on cost. Simple models can be deployed quickly, while custom algorithms require significant resources and experimentation.

Basic Rule-Based Systems ($5,000 – $10,000)

These rely on predefined rules instead of machine learning. They are inexpensive but limited in capability, suitable only for simple automation or early proof-of-concepts.

Pre-Trained Models with Fine-Tuning ($10,000 – $30,000)

Using AI APIs or pre-trained models like GPT, BERT, or Google Vision reduces time and cost. Moderate fine-tuning on your dataset adds flexibility while keeping expenses controlled.

Custom Machine Learning Models ($30,000 – $100,000)

Built from scratch for your business needs, these require large datasets, training cycles, and experimentation. They deliver the most value but are resource-intensive and demand specialized talent.

3. Cloud Infrastructure and Computing ($500 – $30,000)

AI MVPs require scalable infrastructure for training, testing, and deployment. Costs vary depending on load, redundancy, and compliance requirements.

Basic Development Servers ($500 – $5,000)

Suitable for small prototypes or local testing environments. These servers are cost-efficient but cannot handle production-level AI workloads.

Cloud AI Platforms ($5,000 – $20,000)

Platforms like AWS, Azure, or GCP provide pay-as-you-go options for model training and inference. Costs scale with usage but offer flexibility for MVP development.

Enterprise-Grade Infrastructure ($20,000 – $30,000)

For MVPs expected to scale quickly or handle sensitive data, enterprise cloud setups with redundancy and compliance features are necessary. These come with higher recurring costs.

4. MVP Development (Frontend & Backend) ($10,000 – $50,000)

Interfaces, APIs, and user-facing applications bring the AI functionality to life. The scope of your frontend and backend impacts cost significantly.

Simple Web App or API ($10,000 – $20,000)

A minimal interface with basic UI or APIs is often sufficient to validate the AI’s core functionality. This is common for early-stage MVPs.

Mobile Apps or Interactive Dashboards ($20,000 – $50,000)

If your MVP requires richer experiences such as mobile apps, dashboards, or advanced visualizations, costs increase. These features improve usability but require additional development hours.

5. Team and Talent Costs ($15,000 – $100,000)

The expertise of your team is one of the largest cost factors in AI MVP development. Rates depend on skill level, region, and whether you hire in-house MVP developers or work with agencies.

Freelancers or Agencies (Lower Cost Range)

Working with freelancers or specialized agencies provides flexibility and keeps costs lower. This is often the choice for startups seeking rapid prototyping.

Specialized Talent (Higher Cost Range)

Hiring top-tier in-house experts significantly increases costs. AI/ML engineers typically charge $80–$200 per hour, backend developers $60–$150 per hour, frontend developers $50–$120 per hour, and data scientists $90–$200 per hour.
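To see what the five cost drivers imply in total, you can add up their low and high ends. The sketch below copies the ranges from the headings above and sums them. It assumes the categories are additive and do not overlap, which the breakdown does not state; team costs in particular may already be reflected in the development line items, so treat the result as an outer envelope rather than a quote.

```python
# (low, high) USD ranges copied from the five cost-driver headings above.
cost_drivers = {
    "data_collection_and_preparation": (0, 50_000),
    "ai_model_development": (5_000, 100_000),
    "cloud_infrastructure_and_computing": (500, 30_000),
    "frontend_and_backend_development": (10_000, 50_000),
    "team_and_talent": (15_000, 100_000),
}


def total_range(drivers: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the low ends and the high ends across all cost drivers."""
    low = sum(lo for lo, _ in drivers.values())
    high = sum(hi for _, hi in drivers.values())
    return low, high


low, high = total_range(cost_drivers)
print(f"${low:,} to ${high:,}")  # prints "$30,500 to $330,000"
```

The spread between the two ends is the practical argument for careful scoping: choosing public datasets, a pre-trained model, and a simple web interface keeps a project near the low end, while custom models and enterprise infrastructure push it toward the high end.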

Tools and Technologies for AI MVP Development

Choosing the right tools can determine whether your AI MVP is built quickly, tested effectively, and scaled smoothly.

The stack you select should balance speed, cost-efficiency, and long-term maintainability. Here are the key categories to focus on:

1. AI/ML Frameworks

Frameworks are the foundation of any AI application development. TensorFlow and PyTorch dominate the market for deep learning tasks, while Scikit-learn works well for classical machine learning models.

Keras simplifies neural network prototyping, and Hugging Face accelerates natural language processing projects with pre-trained models. The right choice depends on your use case and data complexity.

2. Rapid Prototyping Frameworks

Your MVP needs a functional interface and backend to showcase the AI model. For fast development, tools like ReactJS (frontend), Django or FastAPI (backend), and Streamlit (AI dashboards) are highly effective.

In early AI startup MVP development, no-code tools like Bubble or Zapier can reduce time-to-market when testing ideas before investing in full builds.

3. Data Tools

AI models succeed or fail based on data readiness. Pandas and NumPy remain essential for data cleaning and analysis.

For labeling and annotation, tools like Label Studio or Amazon SageMaker Ground Truth streamline dataset preparation.

These tools reduce manual overhead while ensuring your training data is consistent and reliable.

4. Cloud Platforms

Scalable infrastructure is critical in AI-powered MVP development. AWS SageMaker, Google Vertex AI, and Azure ML provide managed environments for training, deploying, and monitoring AI models.

These platforms also support compliance, monitoring, and auto-scaling, making them ideal for teams looking to move from prototype to production.

5. Collaboration and Version Control

Beyond frameworks, your team needs strong workflows. GitHub or GitLab provide version control for code and models.

MLflow or Weights & Biases help track experiments, metrics, and reproducibility, all essential for scaling AI MVPs responsibly.

Selecting the right combination of AI tools for MVP development is not about picking the most popular names. It’s about choosing tools that align with your budget, team skillset, and long-term product roadmap.

With the right technology stack in place, your MVP moves faster from hypothesis to market validation while keeping technical debt under control.

Challenges in AI MVP Development

AI MVP development offers speed and validation benefits, but it also brings unique challenges that traditional MVPs rarely face.

Addressing these early helps you avoid wasted investment and ensures your MVP delivers measurable outcomes.

1. Data Quality and Availability

The strength of your AI model depends on the quality of your data. Many AI startups struggle with datasets that are too small, incomplete, or biased. Poor data quality leads to inaccurate predictions, which can quickly undermine user trust in your MVP.

2. Overestimating AI Capabilities

A common mistake in custom MVP development with AI is overpromising. Early-stage models are rarely perfect. If your MVP promises more than the AI can deliver, users will disengage. Starting small and setting realistic expectations is critical.

3. High Costs at Scale

While prototyping may be affordable, costs often rise when scaling. Training, retraining, and serving models on cloud platforms can become expensive if not optimized. Without careful planning, infrastructure expenses can outweigh the business value delivered.

4. Model Drift and Ongoing Maintenance

AI is not a one-time build. User behaviour, external factors, and data patterns change over time.

This causes model drift, where predictions lose accuracy. Continuous monitoring, retraining cycles, and maintenance pipelines are essential for long-term reliability.
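One widely used way to detect shifting data patterns is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it is distributed now. The sketch below is a minimal standard-library version for a single numeric feature. The function name, the equal-width binning, the `1e-6` floor, and the sample data are illustrative assumptions, not a prescribed method.

```python
import math


def population_stability_index(
    expected: list[float], actual: list[float], bins: int = 10
) -> float:
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline feature has no spread")
    width = (hi - lo) / bins  # equal-width buckets over the baseline range

    def bucket_shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range values into the first or last bucket.
            counts[max(0, min(idx, bins - 1))] += 1
        # A small floor avoids log(0) and division by zero for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Made-up feature values: a uniform baseline and a sample shifted upward.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
```

An unchanged distribution gives a PSI of zero, and the value grows as the recent data moves away from the baseline. A commonly quoted rule of thumb treats values above roughly 0.25 as a significant shift, but that cutoff is a convention rather than a guarantee, so calibrate it against your own data before wiring it to a retraining trigger.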

5. Compliance and Ethical Risks

Regulatory requirements such as GDPR or HIPAA add complexity to AI-powered MVP development.

Beyond compliance, ethical concerns like bias or “black box” decisions can damage reputation. Building explainability and fairness into your MVP from day one is non-negotiable.

6. Integration with Existing Systems

An AI MVP rarely exists in isolation. Enterprises expect seamless integration with CRMs, ERPs, or data pipelines. If integration is ignored during development, adoption becomes difficult, and your MVP risks being sidelined despite strong AI performance.

AI MVP development is rewarding but unforgiving if these challenges are overlooked. Success comes from treating your MVP not just as a product experiment but as the foundation of a scalable, responsible AI solution.

How RaftLabs Helps Startups Build and Scale an AI MVP

Bringing an AI MVP to market involves balancing speed, model accuracy, infrastructure reliability, and user adoption. Many startups struggle with these challenges, which is where our experience comes in.

1. Tailored AI MVP Development

Every product has unique needs. We focus on building lean but powerful AI models that solve the core problem from day one.

Instead of overloading the MVP with unnecessary features, we refine core AI functionality, ensuring it performs consistently across varied datasets and scenarios.

2. Scalability from the Start

One of the biggest pitfalls in AI startup MVP development is building something that works in pilots but fails under real-world load.

We design cloud-first architectures, optimize infrastructure, and integrate auto-scaling so your MVP can handle growing data volumes and user demand seamlessly.

3. Data-Driven Decision Support

Scaling too early is a common risk. We help you avoid wasted spend by analyzing critical signals like model performance, user engagement, and retention before expanding. If the AI is underperforming, we refine it first to ensure resources are invested wisely.

4. End-to-End Technical Expertise

Our team brings expertise in AI tools for MVP development, backend engineering, frontend design, and cloud optimization. This cross-functional capability ensures that your AI-powered MVP is not only functional but also user-friendly, secure, and reliable.

5. Proven Track Record With Startups

We’ve partnered with startups across industries to take their AI ideas from concept to market-ready MVP. Our approach reduces risk, accelerates time-to-market, and sets a strong foundation for scaling into enterprise-ready solutions.

Our goal is simple: to ensure your AI MVP is not just built but built to last. With the right strategy, technology stack, and product mindset, we help you validate faster, scale smarter, and grow stronger in competitive markets.

Real-World Case Studies

1. AI MVP for Conversational AI Chatbot for SaaS

We developed a conversational AI chatbot MVP that transformed static customer interview and feedback forms into dynamic, interactive conversations.

This solution enabled businesses to collect more insightful data, enhance user engagement, and streamline feedback processes. The MVP was designed with scalability in mind, allowing for easy integration into existing platforms and future expansion.

2. AI MVP for Remote Patient Monitoring App for Chronic Care

In the healthcare sector, RaftLabs created an AI-driven remote patient monitoring app tailored for chronic disease management.

The MVP utilized real-time data analytics to provide healthcare providers with actionable insights, improving patient outcomes and reducing hospital readmissions. The app was designed to be HIPAA-compliant, ensuring the privacy and security of patient data.

Conclusion

Building an AI MVP is not just about proving an idea. It’s about validating data strategies, testing AI models in real-world conditions, and preparing for scalability from day one. Done right, an AI MVP gives you faster market validation, reduced risk, and a clear roadmap for growth.

From the product discovery stage to selecting the right technology stack, every decision shapes whether your MVP becomes a stepping stone or a stumbling block.

Startups, enterprises, and agencies that approach AI MVP development with a structured plan gain a competitive edge by learning faster and scaling smarter.

At RaftLabs, we specialize in guiding teams through this journey. Our experience in custom MVP development with AI ensures your product is lean, reliable, and future-ready.

If you’re planning to build an AI MVP, let’s talk about how we can help you launch faster and scale with confidence.

Frequently Asked Questions

- An MVP (Minimum Viable Product) is the smallest, most focused version of a software product that can be released to real users to test one core assumption: does this solve a real problem for a real person? It is production-ready code that real users interact with, generating real behavioral data. That data tells you whether your core hypothesis holds, before you've committed 12 months and $200,000 to a full product. An MVP typically includes the core user workflow that delivers the primary value, basic user accounts, a minimal admin panel, and only the integrations the product literally cannot function without. Everything else is version two. The word "minimum" refers to scope, not quality. The MVP codebase is the foundation of everything you'll scale. Build it like it matters.

- A prototype simulates the product. An MVP is the product. A prototype has no real backend. It's a clickable mockup or interactive design used to test a concept, validate a UX flow, or pitch investors before committing to a build. It typically takes 1–3 weeks and costs $2,000–$8,000. The users are your team and investors, not real customers. An MVP is working, deployable software. Real users interact with it. Real data comes back. It answers the question a prototype can't: will users actually use this, keep using it, and pay for it? The practical rule: if your biggest uncertainty is "will users want this?", build a prototype first. If it's "will users keep coming back?", build the MVP. Treating a prototype as an MVP is one of the most common early-stage mistakes. You ship something that can't handle production load to real users and discover the architecture is wrong when it's most expensive to fix. Use a prototype when you're pre-seed, need investor validation, or are technically uncertain about feasibility. Move to an MVP when you have evidence that the concept works and need behavioral data from real usage.

- Only the features required to test your core hypothesis. Nothing else. The practical minimum for most software MVPs:
  - The core user workflow: the single thing that delivers the primary value and is the reason someone would come back. If this doesn't work well, nothing else matters.
  - User authentication and accounts: users need accounts to generate the longitudinal data that validates retention and engagement.
  - A basic admin panel: your team needs visibility into what users are doing and the ability to manage the product without developer involvement.
  - Essential integrations only: the ones the product literally cannot function without. Stripe if it's a paid product. The CRM if user data needs to sync. Nothing aspirational.

  Everything else belongs in version two: advanced analytics dashboards, notification customization, secondary user roles, additional integrations, gamification, and any feature that isn't directly required to test the hypothesis.

- Timelines usually range between 1–2 months, depending on the complexity of the model and data availability. Projects with existing, clean datasets and simpler models move faster. More complex builds, requiring large-scale data preparation or custom AI algorithms, may take longer. Early validation and prototyping can shorten the cycle significantly.

- Most AI MVP development projects cost between $10,000 and $60,000. Costs vary based on factors such as dataset preparation, choice of AI frameworks, infrastructure needs, and the size of the development team. Using pre-trained models or no-code AI tools can reduce costs, while custom-built models, compliance requirements, or enterprise-grade infrastructure drive costs upward.

- Several challenges tend to appear repeatedly across projects:
  - Data quality and availability – AI outcomes depend on representative and unbiased data. Poor data slows progress and limits accuracy.
  - Overbuilding features – Adding too many features early leads to delays and overspending. A lean approach focused on must-haves works best.
  - Runaway infrastructure costs – Cloud and GPU expenses can escalate quickly without monitoring and usage controls in place.
  - Compliance and ethical risks – AI projects face scrutiny around bias, explainability, and regulations like GDPR or HIPAA. Addressing this at the MVP stage avoids costly rework.
  - Integration with workflows – Even well-trained AI models fail if they do not fit into existing user processes. Designing for usability and adoption is as important as technical accuracy.

- AI MVPs bring the most value in industries that generate large amounts of data or rely heavily on process efficiency. These sectors gain measurable ROI even at the MVP stage. Key industries include:
  - Healthcare – predictive diagnostics, patient triage, medical imaging
  - Fintech – fraud detection, credit scoring, trading algorithms
  - Retail & eCommerce – recommendation engines, demand forecasting, customer support
  - Logistics & Supply Chain – route optimization, inventory management
  - Digital Marketing – personalization, ad targeting, campaign automation

- Scaling is not just about technical readiness. It requires validation across business, technical, and user adoption metrics. Expanding too early creates high costs with little return. Checklist before scaling:
  - Consistent user engagement and retention
  - AI models delivering reliable accuracy and stability
  - Infrastructure capable of handling larger data and workloads
  - Compliance and security requirements already in place
  - Clear evidence of ROI or market demand

- It depends on whether you have a technical co-founder and what your timeline looks like. Build in-house if you have a technical co-founder who can lead the architecture and manage developers, you're hiring for the long term, and the product is strategic enough to warrant a permanent internal team from day one. A minimum viable in-house team in the US costs $400,000–$600,000/year in salaries before benefits or overhead, and takes 3–4 months to hire and onboard before a sprint even begins. Outsource to a product studio if you're a non-technical founder who needs to move fast, if you don't have a technical leader on your founding team, or if you need a validated MVP before you can justify building an internal engineering function. A qualified product studio delivers a production-ready MVP in 8–12 weeks, the same output that would take 6+ months to staff and build in-house from scratch. The mistake most founders make: trying to build in-house without a technical co-founder. They hire a single developer, discover they can't evaluate the work, architecture decisions get made under pressure, and the codebase becomes expensive to maintain or scale. If you're in that position, outsourcing to a studio with verifiable production case studies is almost always faster and cheaper.